Disclosure: We have lots of features in the system.

Full disclosure: We know you don’t use all these features.

We’re prepared to forgive you for that, but if you’re not creating A/B testing campaigns, we implore you to!

Every campaign manager and every content manager uses the features that suit them and that they’re used to working with.

Whether you spend a lot of time in the reports that analyze your campaigns or focus on managing the recipients on your mailing list (we recommend doing both, of course), A/B testing is important for every campaign you send from the ActiveTrail system.

What is A/B testing?

A/B testing, or A/B split testing, is a method of comparing two versions of something to see which one performs better. It’s used when building web pages and landing pages, in applications, and in any other tool whose effectiveness and reception by users you want to check.

In email marketing, this refers to sending two different versions of the email to a small group of recipients, with the winning version to be sent afterwards to the rest of your recipients.

A/B testing doesn’t demand more work on your part. On the contrary, it makes your work much easier. If you’re torn between two options for any element of the campaign, you don’t need to debate it any further: the A/B test makes the decision for you based on the results.

It’s really easy. This is how it’s done:

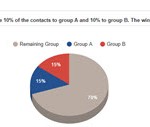

First, choose the percentage of your recipients who will receive the A/B campaign. Remember, this is not a trial run: the A/B versions go out to real customers, so both versions must be complete and ready before you send them.

For example, if you choose to send the A/B test to 30% of your recipients, 15% will receive campaign A and 15% will receive campaign B. If you choose 20%, 10% will receive campaign A and 10% will receive campaign B, and so on.
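To make the split concrete, here’s a minimal Python sketch of how a mailing list could be divided for an A/B test. It’s only an illustration under our own assumptions, not how the ActiveTrail system is implemented: `split_recipients` is a hypothetical helper, recipients are picked at random, the test group is split evenly between A and B, and everyone else is held back to receive the winning version later.

```python
import random


def split_recipients(recipients, test_percent, seed=None):
    """Hypothetical helper: randomly split a mailing list into group A,
    group B, and the remainder that later receives the winning version."""
    if not 0 < test_percent <= 100:
        raise ValueError("test_percent must be between 0 (exclusive) and 100")
    pool = list(recipients)
    random.Random(seed).shuffle(pool)  # test recipients are chosen at random
    test_size = int(len(pool) * test_percent // 100)
    half = test_size // 2  # half of the test group gets A, half gets B
    group_a = pool[:half]
    group_b = pool[half:2 * half]
    remainder = pool[2 * half:]  # these recipients get the winner later
    return group_a, group_b, remainder


# Usage: a 30% test on 1,000 recipients -> 15% get A, 15% get B
emails = [f"user{i}@example.com" for i in range(1000)]
a, b, rest = split_recipients(emails, 30, seed=1)
print(len(a), len(b), len(rest))  # 150 150 700
```

If the test group has an odd number of recipients, this sketch leaves the extra person in the remainder so that A and B stay exactly the same size.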

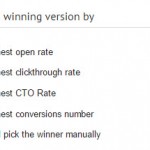

Next, you decide which criterion will determine the winning campaign once the A/B campaign has gone out to your chosen percentage of recipients.

You can pick the winning version according to the following options:

- Clickthrough rate: the percentage of clicks out of all the emails sent

- CTO (click-to-open) rate: the percentage of clicks out of all the emails that were opened

If you pick one of the first four options, the system automatically chooses the winning campaign after a time interval you set, according to the criterion you selected, and sends it out for you.

If you pick the winner manually, you’ll need to log back in to the system once the test has run for the amount of time you specified, and send the version you’ve chosen as the winner.
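To show how the choice of criterion can change the outcome, here’s a minimal Python sketch that computes the open rate, clickthrough rate, and CTO rate for two versions and picks a winner. The names (`VersionStats`, `pick_winner`, and so on) are hypothetical, and we’re assuming simple counts of emails sent, opened, and clicked; the exact counting rules the ActiveTrail system uses (for example, unique versus total clicks) may differ.

```python
from dataclasses import dataclass


@dataclass
class VersionStats:
    # Hypothetical per-version counts collected during the test period
    sent: int
    opens: int
    clicks: int


def open_rate(s: VersionStats) -> float:
    """Percentage of sent emails that were opened."""
    return 100 * s.opens / s.sent if s.sent else 0.0


def clickthrough_rate(s: VersionStats) -> float:
    """Percentage of clicks out of all the emails sent."""
    return 100 * s.clicks / s.sent if s.sent else 0.0


def cto_rate(s: VersionStats) -> float:
    """Click-to-open: percentage of clicks out of the emails that were opened."""
    return 100 * s.clicks / s.opens if s.opens else 0.0


CRITERIA = {"open": open_rate, "clickthrough": clickthrough_rate, "cto": cto_rate}


def pick_winner(a: VersionStats, b: VersionStats, criterion: str) -> str:
    """Return "A" or "B" by the chosen criterion (ties go to A in this sketch)."""
    metric = CRITERIA[criterion]
    return "A" if metric(a) >= metric(b) else "B"


a = VersionStats(sent=1500, opens=450, clicks=90)
b = VersionStats(sent=1500, opens=375, clicks=84)
print(pick_winner(a, b, "clickthrough"))  # A (6.0% vs 5.6%)
print(pick_winner(a, b, "cto"))           # B (20.0% vs 22.4%)
```

In this made-up example, version A wins on clickthrough rate because more people opened it, while version B wins on CTO rate because a larger share of the people who opened it went on to click. That’s why it pays to decide up front which criterion actually matches your goal.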

What can you use A/B testing on?

Simply everything. Not sure which header will get the most opens? Try two different headers.

Wondering which sending profile will get your recipients to open your email? Try two different profiles and let the data choose for you.

Here’s a brief survey of how to do A/B testing on a number of common elements:

The email subject line

Your subject line is a key element in your email campaign. Make two versions of the exact same email with two different subject lines.

Choose the percentage of recipients who will get the A/B test (the system picks them at random) and send. Under ‘choose the winning campaign by’, select ‘highest open percent’, and the email with the more effective subject line is the one that will be sent to most of your customers.

Not sure if you want to use personalization in the subject line? Do an A/B test.

The ‘from’ line

From whom do you want your email to be sent? From the company? From the CEO? From the marketing director?

Try two different senders in the A/B campaign and set the email with the highest open percent as the winner.

This is how it looks in the system:

Design

You can design two completely different emails in the A/B campaign and see which one gets the most clicks or conversions. But if you also want to draw conclusions from the test that you can apply to future emails, it’s worth testing one element at a time.

You can run an A/B campaign on the email’s colors, its graphics, or a specific picture you want to include in an article, and check which version gets the most clicks.

Call to action

Design two emails with two different texts for the call to action and check with A/B testing which of them gets a higher clickthrough or CTO rate.

Content

You can also check your content with A/B testing. Choose two different texts for the email and check which of them generates more clicks/conversions.

Facts about A/B testing that are good to know

- 44% of companies use an A/B testing program (it should be 100%) (Seogadget)

- President Obama raised $60 million thanks to A/B testing (event 360)

- Gmail once tested 50 different hues for its call-to-action button and eventually found the one that converted best! (QuickSprout)

We’ve covered only some of what you can do with A/B testing. The possibilities are endless: you can test anything from a single comma in your subject line that affects your open rate to which article will lead a recipient to continue on to your website, sign up for your service, and become a lifelong customer.
